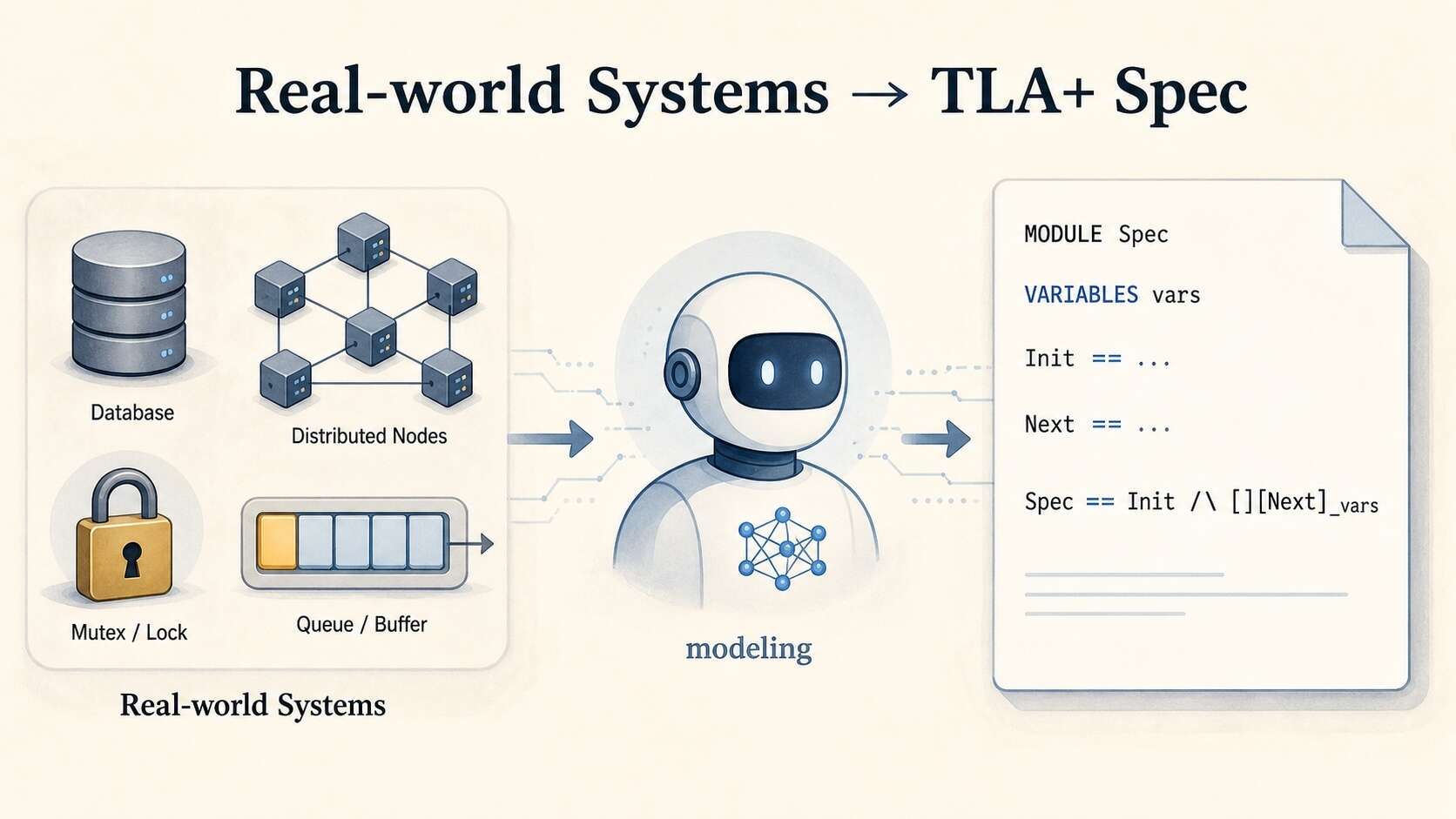

Can LLMs model real-world systems in TLA+?

The Specula team benchmarked frontier LLMs on generating TLA+ specs from real system code—finding near-perfect syntax scores mask catastrophic conformance failures on distributed systems.

What Matters

- SysMoBench evaluates 11 real systems (Etcd, ZooKeeper, RedisRaft, CURP) across four phases: syntax, runtime, conformance, invariant.

- Frontier LLMs average ~46% conformance and ~41% invariant scores despite near-100% syntax scores.

- Claude reproduced the Raft paper appendix spec when asked for Etcd’s spec—a concrete recitation-vs-modeling failure.

- Claude Sonnet 4.6 scores only 25% overall on RedisRaft and CURP, among the hardest systems.

- Two systematic failure modes: specs enter states real systems never reach, and miss states real systems always reach.

- ZooKeeper FLE example: Claude used set union for recvset instead of map-overwrite, admitting impossible multi-vote states.

- Specula, their TLA+ agent built on Claude Code/Codex, achieves full conformance and invariant scores on current SysMoBench tasks.